The Architecture of Precision.

Data innovation is not a product of luck; it is an output of rigorous analytics engineering. We build high-performance big data platforms that treat data as code, ensuring every pipeline is versioned, tested, and observable.

Strict Adherence to Resilient Systems

Our engineering culture is built on the belief that big data platforms must be as reliable as financial ledgers. We eliminate "black box" logic in favor of transparent, modular frameworks.

Modular Frameworks

Decoupled storage and compute for maximum elasticity.

CI/CD for Data

We treat data pipelines as software. Every transformation is unit-tested, peer-reviewed, and deployed through automated runners to minimize production regressions.
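A minimal sketch of what a unit-tested transformation can look like. The `Order` type, the `normalize_currency` function, and the test data are hypothetical and chosen only for illustration; a CI runner would execute the test on every change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    # Hypothetical record shape, assumed for this example only.
    order_id: int
    amount_cents: int
    currency: str


def normalize_currency(orders):
    """Return new Order rows with trimmed, upper-cased currency codes."""
    return [
        Order(o.order_id, o.amount_cents, o.currency.strip().upper())
        for o in orders
    ]


def test_normalize_currency():
    raw = [Order(1, 1250, " usd"), Order(2, 900, "Eur ")]
    cleaned = normalize_currency(raw)
    assert [o.currency for o in cleaned] == ["USD", "EUR"]
    # The transformation must not drop or reorder rows.
    assert [o.order_id for o in cleaned] == [1, 2]


if __name__ == "__main__":
    test_normalize_currency()
    print("transformation tests passed")
```

Keeping the transformation a pure function of its input is what makes it testable without a running warehouse.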

Performance Tuning

Deep optimization of partitioning, clustering, and predicate pushdown, targeting sub-second query latency on billion-row datasets.

Governance by Design

Integrated PII masking and role-based access control (RBAC) at the storage level, satisfying both technical and legal requirements.
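The idea of combining masking with role-based access can be sketched as follows. The `COLUMN_POLICY` mapping, the role names, and the column names are assumptions made for this example; a real deployment would enforce the policy inside the storage engine rather than in application code.

```python
import hashlib

# Hypothetical policy: roles allowed to read each column unmasked.
COLUMN_POLICY = {
    "email": {"compliance_officer"},
    "country": {"analyst", "compliance_officer"},
}


def mask(value: str) -> str:
    """Replace a value with a short, stable token derived from SHA-256."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]


def read_row(row: dict, role: str) -> dict:
    """Return the row as the given role is allowed to see it."""
    visible = {}
    for column, value in row.items():
        # Columns missing from the policy are masked for everyone (default deny).
        allowed_roles = COLUMN_POLICY.get(column, set())
        visible[column] = value if role in allowed_roles else mask(str(value))
    return visible


if __name__ == "__main__":
    row = {"email": "a@example.com", "country": "TR"}
    print(read_row(row, "analyst"))
    print(read_row(row, "compliance_officer"))
```

An unsalted hash is used here only to keep the sketch short; it is not a substitute for proper tokenization or encryption of sensitive values.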

Semantic Layering

Bridging the gap between raw lakehouse data and business visualization through robust, documented semantic modeling.

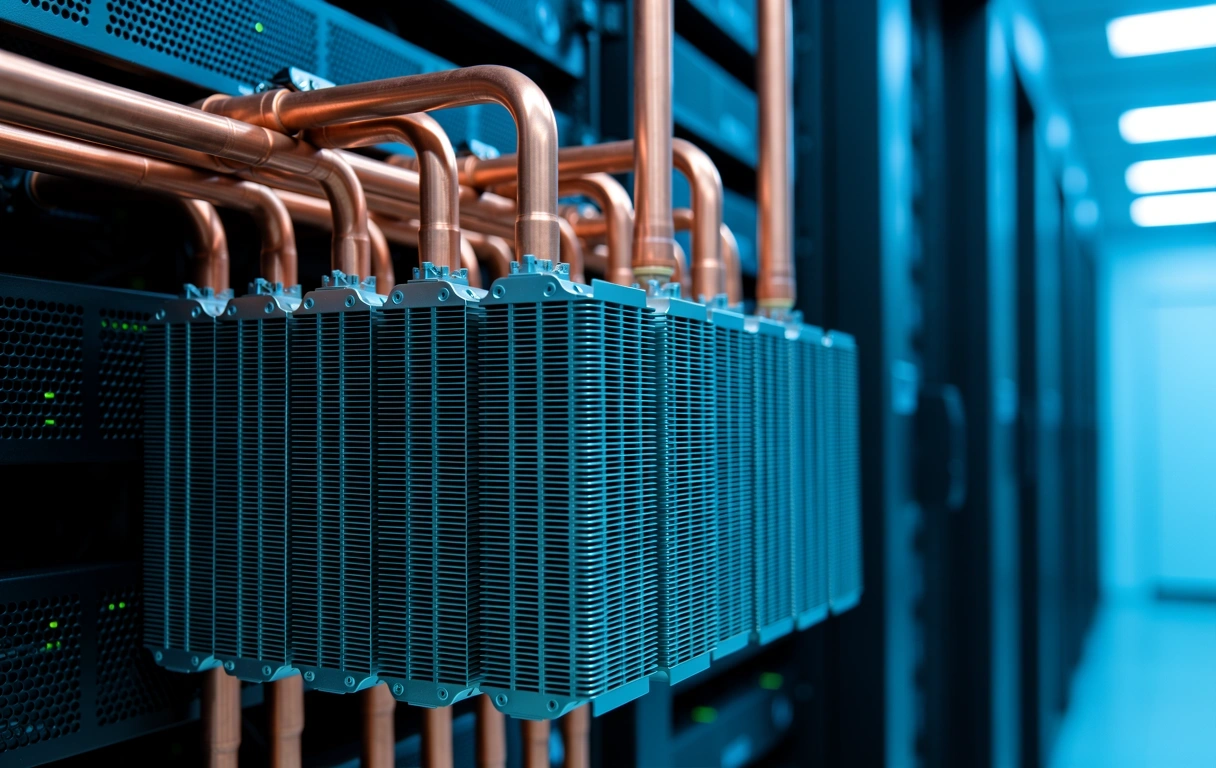

Scalability is Mandatory.

Infrastructure should never be the bottleneck of analytics engineering. We specialize in cloud-native and hybrid deployments that scale horizontally without linear cost increases. By optimizing the underlying compute engines, we allow for genuine data innovation across your entire organization.

- ZERO-DOWNTIME SCHEMA EVOLUTION

- AUTOMATED METADATA HARVESTING

- COST-AWARE COMPUTE ALLOCATION

Engineering Lifecycle

From raw ingestion to actionable intelligence, our engineering process follows a deterministic sequence designed for reliability.

Immutable Ingestion

Preserving raw data integrity by enforcing write-once policies and strictly append-only staging zones for auditing.
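One way to approximate a write-once staging zone on a plain filesystem is exclusive file creation. This is a sketch under that assumption; the `write_once` helper, the `.raw` suffix, and the batch naming are hypothetical, and object stores typically offer their own immutability controls.

```python
import os
import tempfile


def write_once(staging_dir: str, batch_id: str, payload: bytes) -> str:
    """Create a batch file; refuse to overwrite one that already exists."""
    path = os.path.join(staging_dir, f"{batch_id}.raw")
    # O_EXCL makes os.open raise FileExistsError if the file is already there.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL)
    with os.fdopen(fd, "wb") as handle:
        handle.write(payload)
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as staging:
        write_once(staging, "batch-001", b"first delivery")
        try:
            write_once(staging, "batch-001", b"attempted overwrite")
        except FileExistsError:
            print("overwrite rejected; original batch preserved")
```

New data arrives as new batch files, so the staging zone only ever grows and earlier deliveries remain available for audit.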

Declarative Transformations

Defining "what" the data should look like rather than "how" to move it, using modern SQL-based engineering workflows.
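To illustrate the "what, not how" distinction, the sketch below declares a target dataset as a SQL view and lets the engine decide how to compute it. It uses Python's built-in `sqlite3` module purely as a stand-in engine; the table and view names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE raw_orders (
        order_id INTEGER,
        customer_id INTEGER,
        amount_cents INTEGER
    );
    INSERT INTO raw_orders VALUES (1, 10, 1250), (2, 10, 900), (3, 11, 400);

    -- The view states what the result should contain, not how to build it.
    CREATE VIEW customer_revenue AS
    SELECT customer_id, SUM(amount_cents) AS revenue_cents
    FROM raw_orders
    GROUP BY customer_id;
    """
)

rows = conn.execute(
    "SELECT customer_id, revenue_cents FROM customer_revenue ORDER BY customer_id"
).fetchall()
print(rows)  # [(10, 2150), (11, 400)]
```

Because the definition contains no loops or copy steps, the same statement can be reviewed, versioned, and re-run against a different engine with little change.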

Quality Gate Enforcement

Automated checks for nulls, uniqueness, and referential integrity before data reaches the consumption layer.
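The three checks named above can be sketched in a few lines. The `run_quality_gate` function and the `orders` and `customers` record shapes are assumptions for illustration; dedicated data-testing tools express the same checks declaratively.

```python
def run_quality_gate(orders, customers):
    """Return a list of failure messages; an empty list means the gate passes."""
    failures = []

    # Null check: every order must reference a customer.
    if any(o["customer_id"] is None for o in orders):
        failures.append("null customer_id in orders")

    # Uniqueness check: order_id must not repeat.
    order_ids = [o["order_id"] for o in orders]
    if len(order_ids) != len(set(order_ids)):
        failures.append("duplicate order_id in orders")

    # Referential integrity: non-null customer_id values must exist in customers.
    known = {c["customer_id"] for c in customers}
    orphans = {
        o["customer_id"]
        for o in orders
        if o["customer_id"] is not None and o["customer_id"] not in known
    }
    if orphans:
        failures.append(f"unknown customer_id values: {sorted(orphans)}")

    return failures


if __name__ == "__main__":
    customers = [{"customer_id": 10}]
    orders = [
        {"order_id": 1, "customer_id": 10},
        {"order_id": 1, "customer_id": 99},
    ]
    problems = run_quality_gate(orders, customers)
    # A pipeline would stop here instead of publishing to the consumption layer.
    print(problems)
```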

Observability & Lineage

End-to-end tracing of every data point to provide root-cause analysis within minutes during data incidents.
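At its simplest, lineage is a dependency graph that can be walked upstream from a failing dataset. The sketch below assumes a hypothetical `LINEAGE` mapping from each dataset to its direct inputs; the dataset names are invented for the example.

```python
from collections import deque

# Hypothetical lineage graph: dataset -> datasets it is built from.
LINEAGE = {
    "revenue_dashboard": ["fct_orders"],
    "fct_orders": ["stg_orders", "stg_customers"],
    "stg_orders": ["raw_orders"],
    "stg_customers": ["raw_customers"],
}


def upstream_of(dataset, lineage=LINEAGE):
    """Return every upstream dataset, nearest dependencies first (breadth-first)."""
    seen = set()
    ordered = []
    queue = deque([dataset])
    while queue:
        current = queue.popleft()
        for parent in lineage.get(current, []):
            if parent not in seen:
                seen.add(parent)
                ordered.append(parent)
                queue.append(parent)
    return ordered


if __name__ == "__main__":
    # Candidate root causes for an incident on the dashboard, closest first.
    print(upstream_of("revenue_dashboard"))
```

Listing the nearest dependencies first gives an on-call engineer a natural order in which to inspect candidate root causes.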

Ready to build your Data Foundry?

Our team is based in Kadikoy, Istanbul, providing worldwide expertise in high-performance data engineering. Let's discuss your architectural challenges.

Foundry HQ

Kadikoy 150, Istanbul

Talk to us

+90 216 335 9922

info@turkishdatafoundry.digital